Introducing Scalable Semantic Analytics Stack (SANSA Stack)

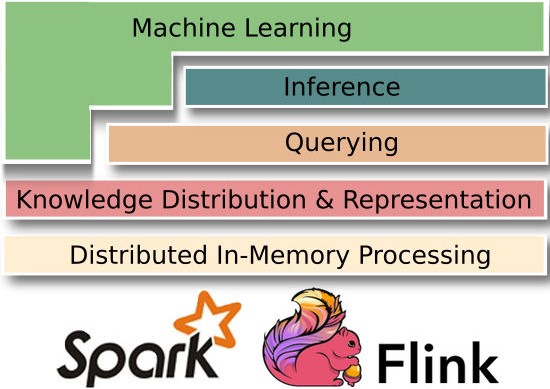

In order to deal with the massive data being produced at scale, the existing big data frameworks like Spark and Flink offer fault tolerant, high available and scalable approaches to process massive sized data sets efficiently. These frameworks have matured over the years and offer a proven and reliable method for the processing of large scale unstructured data.

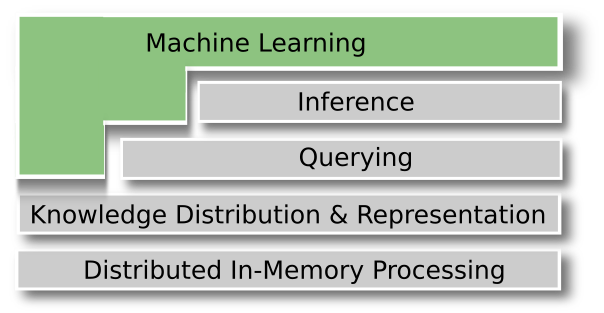

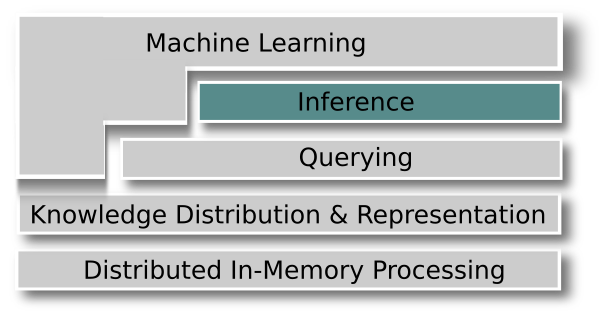

We have decided to explore the use of these two prominent frameworks for RDF data processing. We are developing a library named Semantic Analytics Stack SANSA, that can process massive RDF data at scale and perform learning and prediction for the data. This would be in addition to answering queries from the data. The following section describes working of SANSA and its key features grouped by each layer of the stack. Although we aim to support both Spark and Flink. However, our main focus is Spark at the moment, therefore, the following discussion would mainly be in the context of different features offered by Spark. Figure 1 depicts the working of the SANSA stack, where RDF layer offers a basic API to read and write native RDF data. On top there are Querying and Inference layer. Then, we would also offer a machine learning library that could perform several machine learning tasks out of the box on linked RDF data.

Architecture

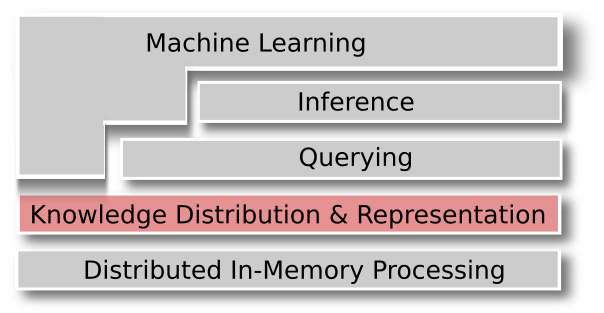

The core idea of SANSA-Stack is drawn from the valuable lessons that we have learned through the experience of big data tools and technologies and a lot of background knowledge over semantic web of data.

Figure 1. SANSA Layers

Read Write layer

The lowest layer on top of existing frameworks e.g. Spark or Flink is the read/write layer. This layer provides the facility to read/write native RDF data from HDFS or local drive and represent it in the distributed data structures of Spark.

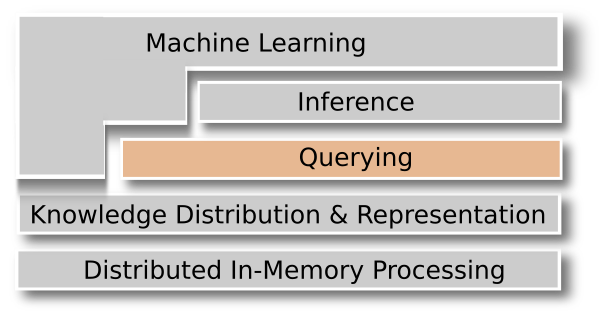

Querying Layer

Querying an RDF graph is a major source of information extraction and searching from the underlying linked data. SPARQL takes the description in the form of a query, and returns that information, in the form of a set of bindings or an RDF graph.

In order to efficiently answer runtime queries for large RDF data, SANSA offers three representation formats of SPARK namely Resilient Distributed Datasets (RDD), Spark Data Frames, and Spark GraphX which is graph parallel computation.

Inference Layer

This layer is relevant to more complex representations like RDFS and OWL that provide schema information as ontologies. An ontology provides shared vocabulary and inference rules to represent domain specific knowledge. One can perform sound and complete reasoning on small ontologies, however it can become very ineffective for very large scale knowledge bases.

We have developed a prototype inference engine and are working towards its refinement and performance enhancement.

Machine Learning Layer

We offer different algorithms namely, tensor factorization, association rule mining, decision trees and clustering for the RDF structured data. The aim is to provide out of the box algorithms to work with the structured data and a distributed, fault tolerant and resilient fashion.

Sansa is a research-work in progress, where we are exploring existing efforts towards Big RDF processing frameworks, and aim to build a generic stack, which can work with large sized Linked Data, offering fast performance in addition to working as an out of the box framework for scalable and distributed semantic data analysis.